Towards the AI Patient

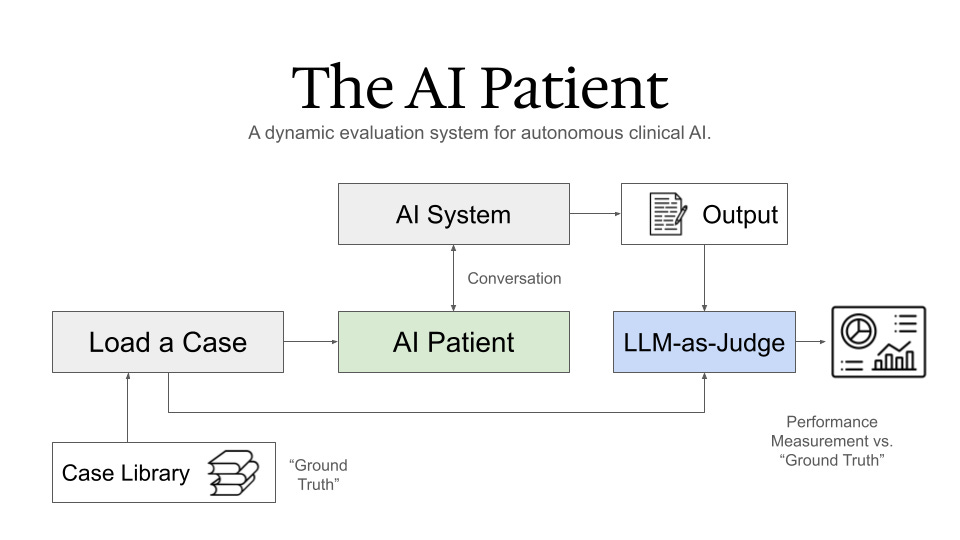

A dynamic evaluation system for autonomous clinical AI.

The next big unlock in AI evaluation won’t come from better tests.

It will come from a better patient - the AI Patient.

I spend a lot of time evaluating AI systems for care delivery, and the current methods have reached their limit.

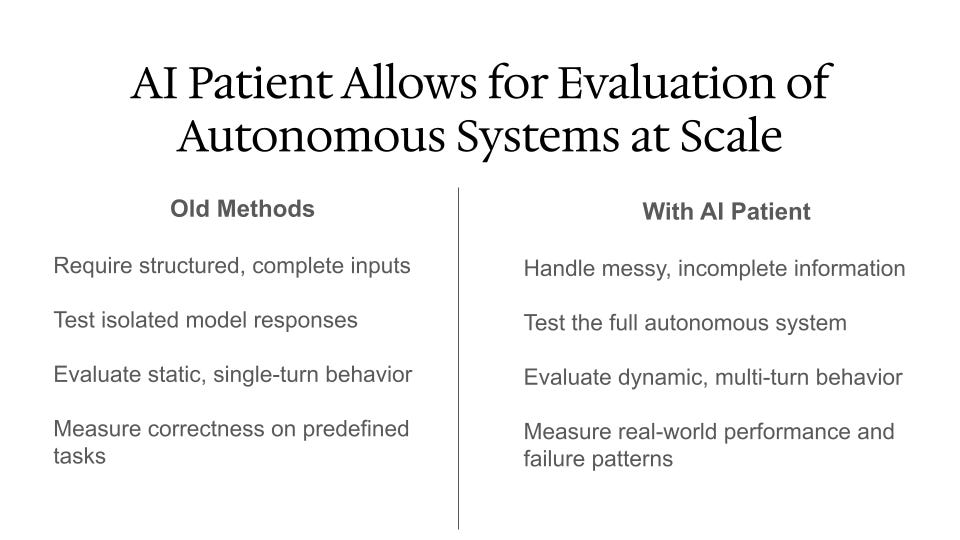

Today, we still grade clinical AI with knowledge exams and structured cases.

MedQA, MedR-Bench, and similar benchmarks are helpful but constrained.

Useful for stress-testing a single model.

Useless once the system becomes agentic, conversational, and deployed in the messy reality of care.

Because real patients don’t hand us well-structured clinical information.

They don’t offer their labs up front.

And they almost never say the one sentence the model “needs” to get the question right.

Current evaluation methods are also designed for an outdated purpose - testing a model’s medical knowledge.

But new agentic systems aren’t built to “take a test.”

They’re built to interact with real patients - iteratively, imperfectly, like clinicians do. And we need to know how well they’re doing at that.

This is why we need the AI Patient.

An AI that can behave like a patient across thousands of known cases, each with ground-truth diagnoses and management paths.

It selects a case, then starts a conversation.

The system under test then works through the cases one by one, seeing new presentations across easy, hard, and rare situations.

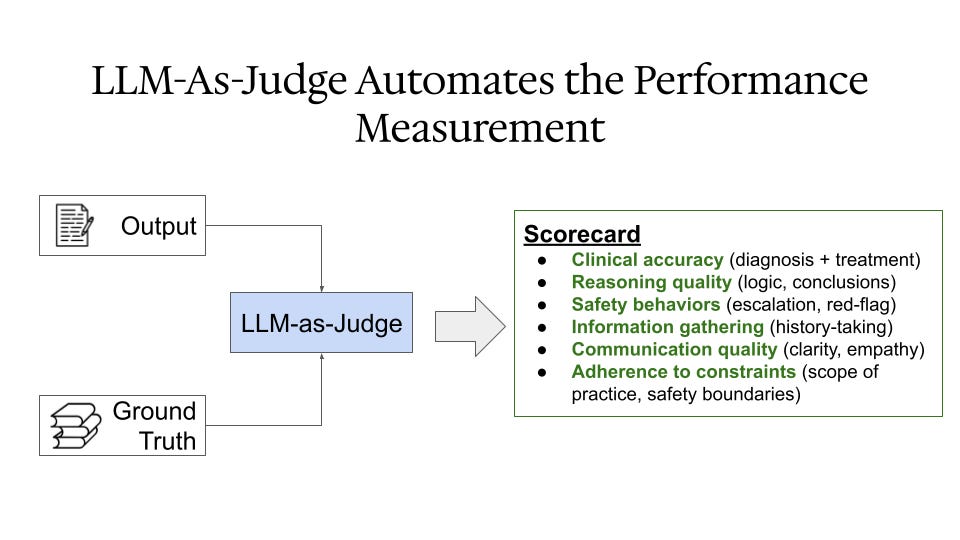

When the visits are done, an LLM-as-a-judge reviews the interactions to flag safety issues, missed cues, or reasoning errors. These get funneled back into a dashboard for review by the development team.
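To make the loop concrete, here is a minimal sketch of how it could be wired together. Everything in it is an assumption for illustration: the names (`Case`, `ScriptedPatient`, `run_visit`, `judge`, `run_suite`) are hypothetical, the single case is a made-up toy, the patient is a keyword script rather than an LLM, and the judge is a rule-based placeholder standing in for the LLM-as-a-judge step.

```python
from dataclasses import dataclass, field


@dataclass
class Case:
    case_id: str
    difficulty: str                 # "easy", "hard", or "rare"
    opening_complaint: str          # all the patient volunteers up front
    hidden_facts: dict              # topic -> fact revealed only when asked
    true_diagnosis: str             # ground truth used by the judge


@dataclass
class Transcript:
    case: Case
    turns: list = field(default_factory=list)   # (role, text) pairs
    final_diagnosis: str = ""


class ScriptedPatient:
    """Stand-in for the AI Patient: reveals a fact only if asked about it."""

    def __init__(self, case):
        self.case = case

    def answer(self, question):
        for topic, fact in self.case.hidden_facts.items():
            if topic in question.lower():
                return fact
        return "I'm not sure."


def run_visit(case, agent, max_turns=5):
    """Run one conversation between the system under test and the patient."""
    patient = ScriptedPatient(case)
    transcript = Transcript(case)
    transcript.turns.append(("patient", case.opening_complaint))
    for _ in range(max_turns):
        action, text = agent(transcript.turns)
        if action == "diagnose":
            transcript.turns.append(("diagnosis", text))
            transcript.final_diagnosis = text
            break
        transcript.turns.append(("agent", text))
        transcript.turns.append(("patient", patient.answer(text)))
    return transcript


def judge(transcript):
    """Rule-based placeholder for the LLM-as-a-judge review."""
    flags = []
    asked = " ".join(t.lower() for role, t in transcript.turns if role == "agent")
    for topic in transcript.case.hidden_facts:
        if topic not in asked:
            flags.append(f"missed cue: {topic}")
    if transcript.final_diagnosis.lower() != transcript.case.true_diagnosis.lower():
        flags.append("wrong or missing diagnosis")
    return flags


def run_suite(cases, agent):
    """Run every case and collect flags per case, ready for a dashboard."""
    return {case.case_id: judge(run_visit(case, agent)) for case in cases}


# Toy case, invented purely for illustration.
CASES = [
    Case(
        case_id="c1",
        difficulty="hard",
        opening_complaint="I have a bad headache.",
        hidden_facts={"fever": "Yes, since last night.", "neck": "My neck is very stiff."},
        true_diagnosis="meningitis",
    )
]


def hasty_agent(turns):
    """Hypothetical system under test that answers without asking anything."""
    return ("diagnose", "tension headache")


def careful_agent(turns):
    """Hypothetical system under test that asks before committing."""
    questions = ["Have you had any fever?", "Any neck stiffness?"]
    asked = sum(1 for role, _ in turns if role == "agent")
    if asked < len(questions):
        return ("ask", questions[asked])
    return ("diagnose", "meningitis")
```

Calling `run_suite(CASES, hasty_agent)` flags both missed cues and the wrong diagnosis, while `run_suite(CASES, careful_agent)` comes back clean. In a real setup the scripted patient and the rule-based judge would each be replaced by a model, and the case list would number in the thousands.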

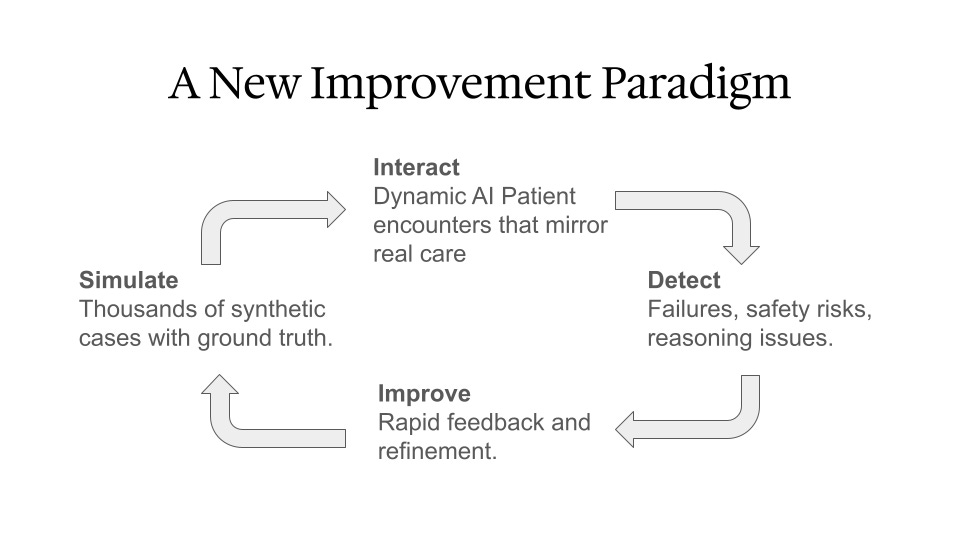

Test → re-test → refine.

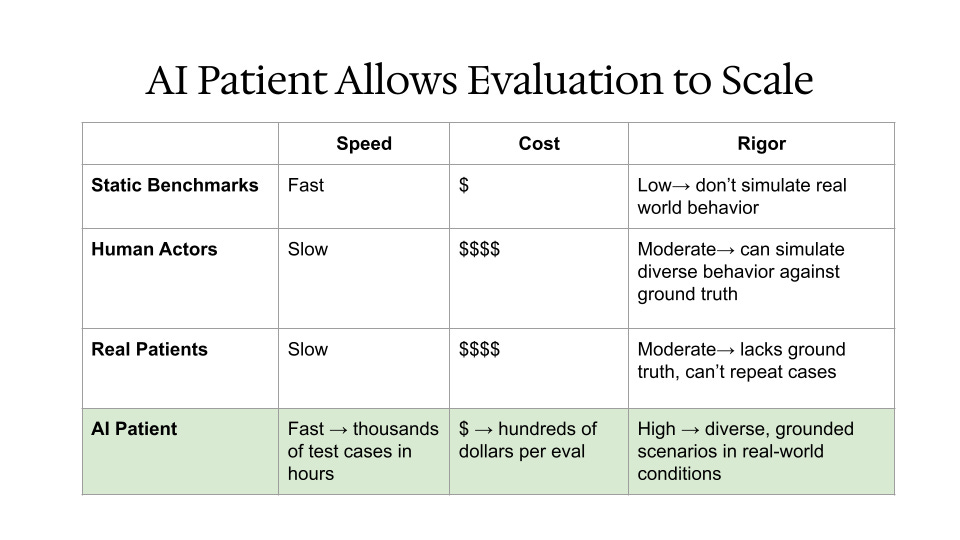

This becomes a continuous loop that costs a few hundred dollars and runs in minutes.

It also mirrors how human clinicians learn:

See more patients.

Get expert feedback.

Close the gaps.

I believe the AI Patient will become the standard for evaluating AI systems in care delivery.

What capabilities would you want the AI Patient to have?