Care Worthiness

A decision standard for responsible deployment of AI systems in care delivery

The Challenges Holding Back Clinical AI Adoption

AI systems are increasingly embedded directly into care delivery and are performing advanced functions including history taking and clinical reasoning. There is enormous potential to expand the scope of these tools. However, organizations face increasingly complex decisions about when, where and how AI can or should be deployed.

Numerous groups have developed frameworks to help organizations make decisions about whether to use AI in healthcare applications. However, these frameworks suffer from three major limitations that hamper effective decision making:

Current frameworks overwhelmingly focus on evaluating technical performance at the expense of other important domains

They do not specify what value system applies when making adoption decisions

They offer no defensible standard on which to base an adoption decision

The consequences of these limitations are predictable: evaluations focus on whether AI ‘passed the test,’ and stakeholders then apply different values and norms to debate whether the results met a threshold that was never defined.

In this environment, progress understandably stalls. At the organizational level, no one can agree on what ‘good’ looks like, leading to endless internal debate. At the industry level, variation in adoption decisions creates fear that first-movers will be unfairly punished. At the national level, the result is a slower pace of advancement in technologies that could greatly benefit patients.

There is a need for a better method that can overcome these limitations and promote action from organizations and their leaders. We don’t just need better metrics; we need better decision logic.

Towards Care Worthiness: A New Decision Standard for AI Adoption

Care Worthiness is a new method for making decisions on AI adoption in healthcare by anchoring deployment to commonly accepted standards of professional entrustment. This allows for richer conversations about the specific role of an AI system in care and a determination of whether that role is acceptable based on the application of a moral standard, not simply a technical one. Teams applying a Care Worthiness lens are able to see decisions in a new light because the standard governs overall permissibility, not raw performance. If a system is deemed Care Worthy, teams can feel confident that they are acting in a way that is aligned with societal values.

Care Worthiness extends the logic of professional entrustment to AI systems. When evaluating Care Worthiness, organizations define the role that a given AI system is intended to take on in the care journey, then assess whether the AI system is worthy of that role given current knowledge of its performance, the degree of risk involved in deployment, and the existing safeguards to manage and mitigate those risks. Any determination of acceptability must meet not only a technical standard but also a moral one: it must feel like a responsible, grounded choice given the sum of available knowledge.

This is not a relaxation of standards. Professional entrustment is a demanding process that includes multiple layers of accountability to patients, peers, supervisors and regulators. Care Worthiness applies to AI systems medicine’s own high standards for delegating clinical work. It also recognizes that knowledge about any system will always be imperfect, but that clinically grounded, morally responsible and institutionally defensible decisions can still be made without perfect information.

Why a Moral Standard is Needed for Seemingly Technical Decisions

Technical evaluations can tell us important things about a system, such as its accuracy, robustness, bias and failure modes. But they cannot answer moral questions like how much variation or potential for error is acceptable, who should bear the risk and responsibility of system performance, what level of supervision is sufficient, and whether the role itself is appropriate. These are normative questions, and in medicine normative questions are settled by a combination of professional codes, accountability structures and individual moral reasoning.

The lack of a moral lens through which to interpret current AI performance evaluations is why so many organizations feel stuck even after the evaluations are in. Without a clear moral lens, each decision feels existential: participants fear that even minor disagreements on acceptability might snowball into major ruptures within organizations, or worse, that they might end up on the wrong side of history.

There is no doubt that technical evidence must be an input into any framework evaluating AI, but it must be in service of moral reasoning, not a substitute for it. Technical evidence may illuminate what is possible, but Care Worthiness determines what is permissible.

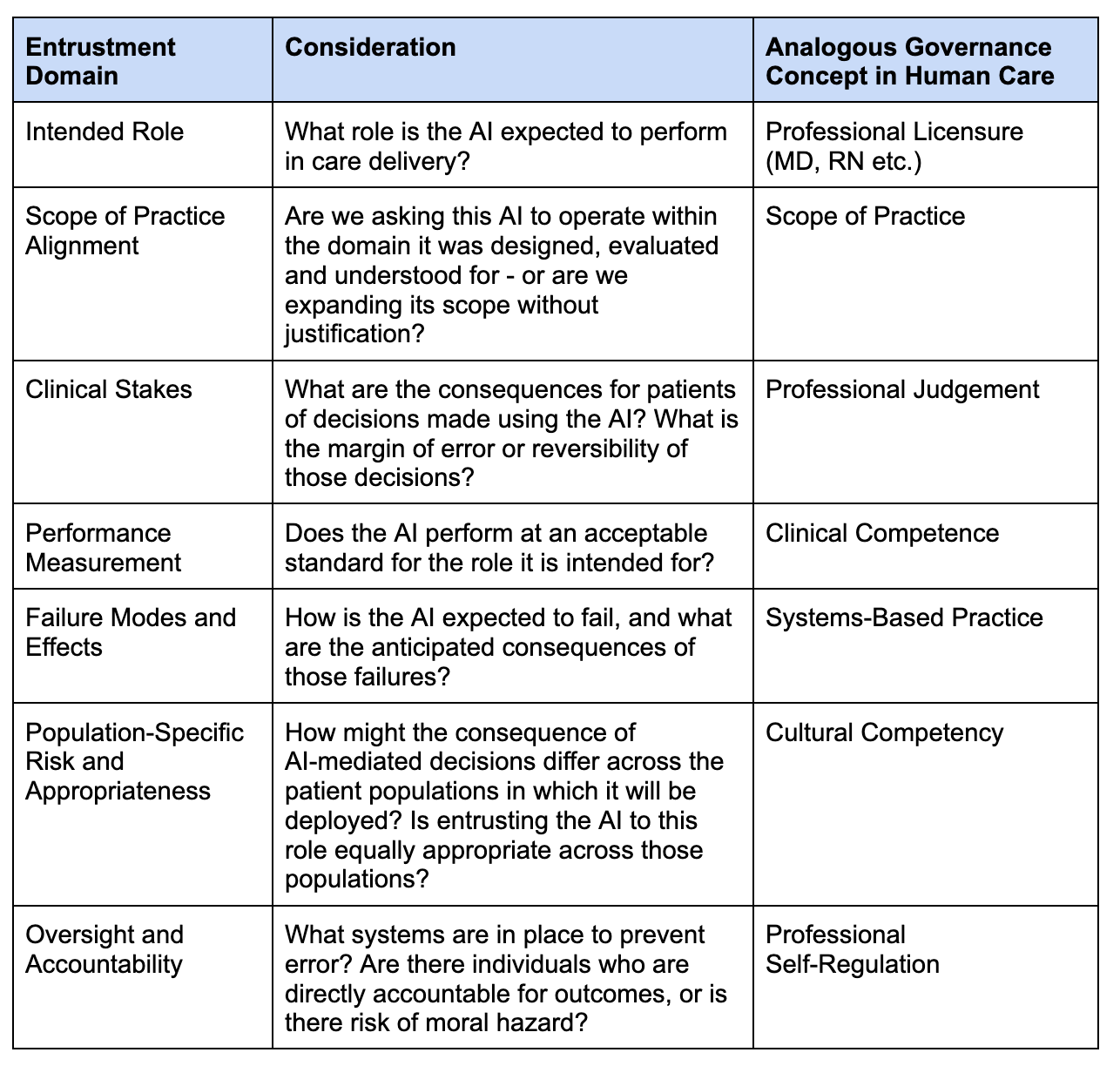

Key Input Domains to Determine Care Worthiness

These domains are not a checklist to determine Care Worthiness - rather, they inform a judgment about whether delegating a role to AI is morally responsible given the existing knowledge about the system.

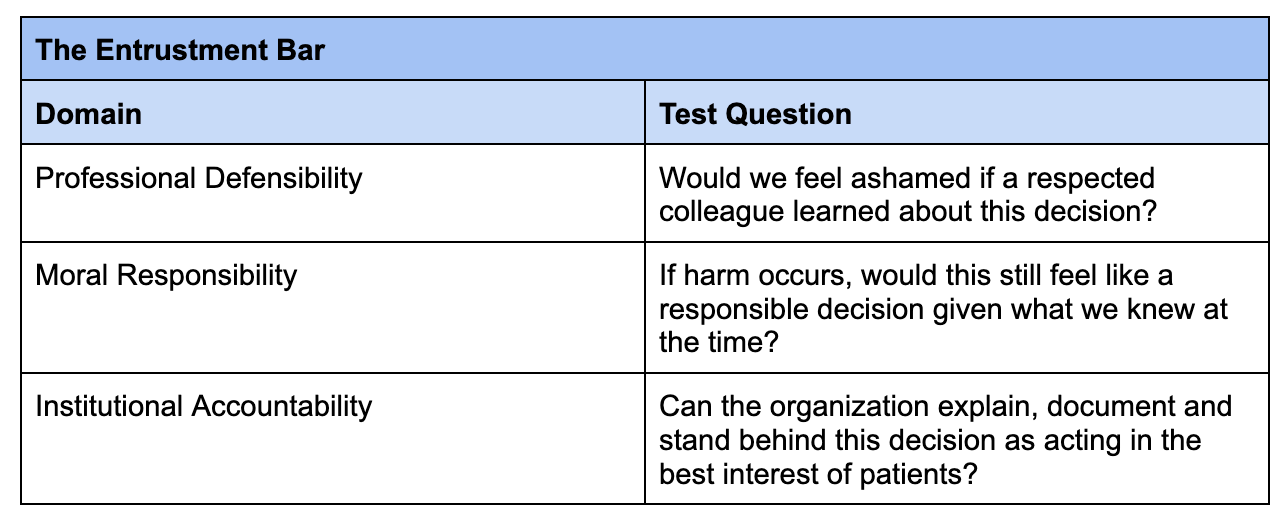

Determining Care Worthiness: The Entrustment Bar

An AI system passes the entrustment bar for Care Worthiness if a reasonable clinician and organization could defend the decision to entrust it with this role - before patients, peers and regulators - given the known risks, safeguards and consequences of failure.

This determination can be made by applying the 3-part test below.

The Entrustment Bar

Towards a Care Worthiness Standard

The bigger problem in determining whether AI can be used in care delivery is not simply lack of evaluation, but decision paralysis. When standards are opaque and devoid of moral authority - especially in healthcare - well-meaning differences in perspective become hard-stop blockers to progress.

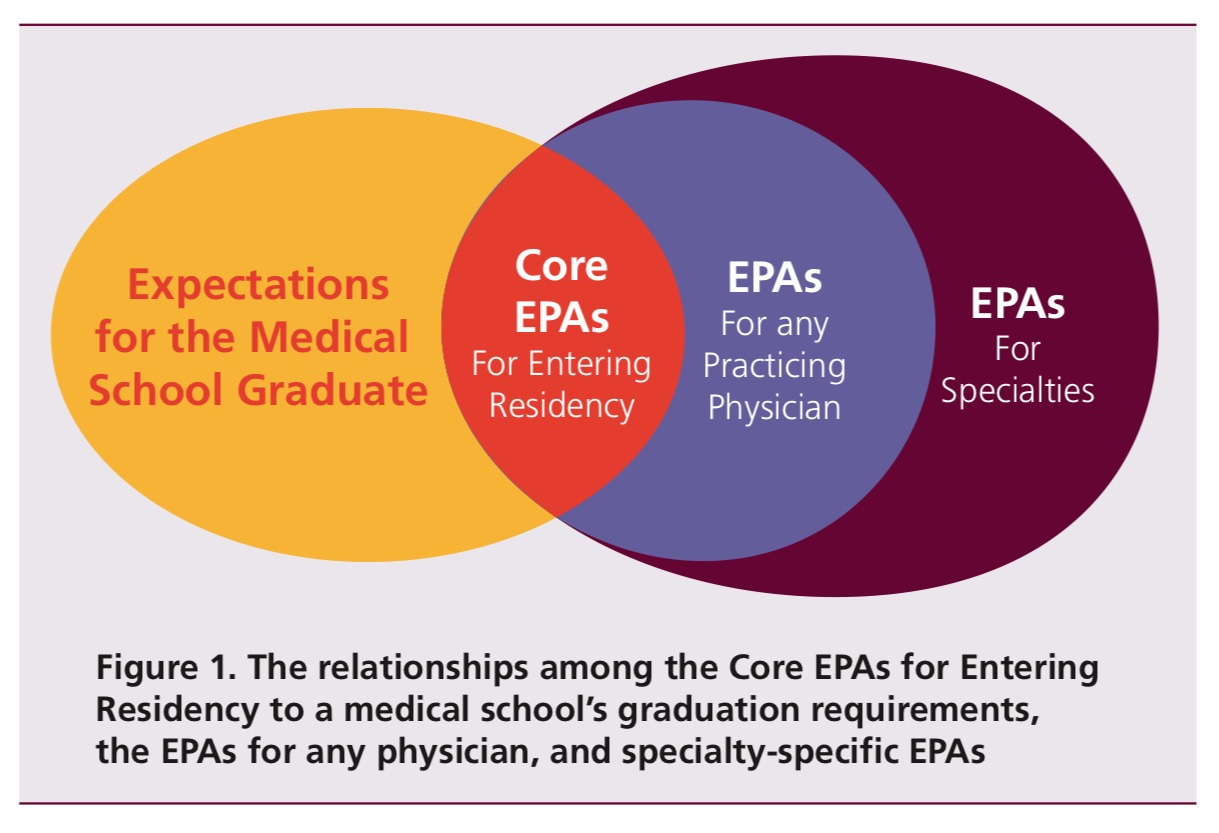

The good news is that medicine has already solved this kind of problem through entrustment. Clinicians and other healthcare professionals are evaluated on multiple domains, both technical and subjective, before being deemed worthy of a clinical role. Even then, their scope is defined, and they work within robust systems that protect against variation and error.

The definition of Care Worthiness can evolve, as can evaluation criteria. But as a standard, it offers a path to responsible progress. Care Worthiness offers a new way forward that neither freezes innovation nor abandons responsibility - by grounding decisions about AI participation in the same logic of entrustment that has long framed how medicine delegates care.

The reality is that healthcare has always been governed by moral standards first, and technical standards second. Care Worthiness restores the correct order when it comes to deploying AI in care delivery.

Interesting framing. How do you think about the need for blame and/or liability? Both the moral and institutional tests approach an answer (was a decision responsible and defensible?) but dance around the question of who is responsible for any harm created. Is there a meaningful distinction between an AI decision and an AI action? What happens when the decision is correct but the execution fails?